On March 25, 2009, a Bombardier DHC-8-402 operated by Japan Air Commuter Company departed from Tanegashima Airport in Tanegashima, Japan. While climbing, the crew heard an abnormal noise from the No. 1 engine, and their instruments indicated engine failure. The flight crew shut down the engine and requested an emergency landing at Kagoshima Airport. The aircraft landed successfully with no injuries.

Investigation

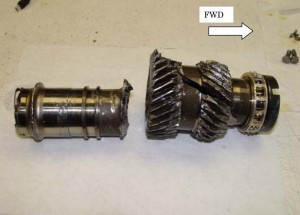

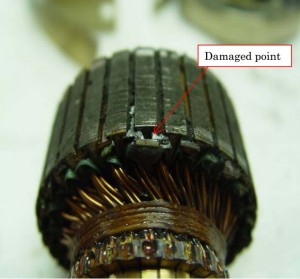

Fractured helical input gearshaft from Japan Air Commuter JA847C (Credits: Japan Transport Safety Board).

The Japan Transport Safety Board found that a gearshaft on the No. 1 engine fractured and detached from its position. The gearshaft failure was likely due to fatigue that started at impurities in the metal used to make the shaft. Fragments from the broken gearshaft flew off, damaging the engine case and breaking turbine blades. This damage caused the loss of the engine. The investigation found that the risk assessment performed by the engine manufacturer was flawed. The risk assessment had identified this hazard, but had characterized the severity as “Significant,” meaning that the result would only be an engine in-flight shutdown (IFSD). However, this incident showed that the results of a gearshaft loss could be catastrophic, because all propeller feathering functions could be lost due to a defective feathering pump drive motor and blocked oil passages. A different classification of risk might have prompted additional quality control measures to check the integrity of the gearshaft. The report stated, “…the appropriateness of the risk assessment results for this serious incident is questionable, because it is based only on the engine IFSD. The risk assessment should be made in view of the safety of the entire aircraft, not of the engine alone.”

Risk Assessments

This incident points to the importance of risk assessment as part of the overall system safety process, and it illustrates how an inadequate risk assessment can lead to poor decision making. Risk combines the likelihood of a mishap with the severity of its consequences, and a risk assessment is an evaluation of both. It helps an organization understand the potential for harm so that it can prioritize the risks that need attention. The risk assessment process supports the decision maker in choosing among risk decisions (e.g., accept the risk without change, reduce the risk to an acceptable level through hazard elimination and mitigation, transfer the risk to another entity such as an insurance company, or avoid the risk by forgoing the activity), and assists in justifying the acceptance of residual risk. Risk assessments can also be useful in making tradeoffs between different mitigation measures and design options. A failure to adequately assess the risk may result in an improper focus on low-risk items or inadequate implementation of safeguards. A risk assessment can be either qualitative or quantitative, although the emphasis in the system safety process is typically on qualitative risk assessment.

According to the National Aeronautics and Space Administration (NASA) Probabilistic Risk Assessment Guide, a risk assessment answers three questions:

- What can go wrong?

- How likely is it?

- What are the consequences?

The first question is answered by developing scenarios as part of the hazard analysis process. Once the problem has been identified through the hazard identification process, the likelihood and severity can be assessed. In most hazard analyses, qualitative judgments are made for the likelihood of occurrence of a mishap and the relative severity. Qualitative risk assessments are often performed using subjective definitions for severity and likelihood. Once the hazard severity and likelihood have been determined, the risk is typically classified in a risk assessment matrix, also known as a risk acceptance matrix. This risk assessment matrix provides a mechanism for defining whether a hazard meets predefined criteria for acceptance. In this way, a decision maker can determine whether the risk is reasonable and can be tolerated, whether additional measures are needed, or whether the activity should be canceled.
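The matrix lookup described above can be sketched in a few lines of Python. This is only an illustration: the category names, the numeric scoring, and the thresholds are assumptions chosen for the example, not the scheme used by the engine manufacturer or by any particular standard. It shows how the same likelihood can lead to a different decision once the severity class changes, which is the kind of shift the JA847C report argued for.

```python
from enum import IntEnum


class Severity(IntEnum):
    # Hypothetical severity classes, lowest to highest.
    NEGLIGIBLE = 1
    MARGINAL = 2
    CRITICAL = 3
    CATASTROPHIC = 4


class Likelihood(IntEnum):
    # Hypothetical likelihood classes, lowest to highest.
    IMPROBABLE = 1
    REMOTE = 2
    OCCASIONAL = 3
    PROBABLE = 4
    FREQUENT = 5


def classify(severity: Severity, likelihood: Likelihood) -> str:
    """Map a (severity, likelihood) pair to an acceptance decision.

    The score and both thresholds are illustrative assumptions; a real
    program would define its matrix cell by cell.
    """
    score = int(severity) * int(likelihood)
    if score >= 12:
        return "unacceptable"
    if score >= 6:
        return "additional measures needed"
    return "acceptable"


# Same hazard likelihood, two different severity judgments.
engine_only_view = classify(Severity.MARGINAL, Likelihood.REMOTE)
whole_aircraft_view = classify(Severity.CATASTROPHIC, Likelihood.REMOTE)
print(engine_only_view)
print(whole_aircraft_view)
```

In this sketch, judging the hazard at the engine level alone leaves it in the tolerable region, while judging it at the aircraft level moves it into the region where further safeguards are called for.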

System safety emphasizes qualitative risk assessment over quantitative analyses. However, as was illustrated in the Japan Air Commuter incident, qualitative assessments alone can provide misleading results. Therefore, quantitative analyses are often used to support risk decision making. Probabilistic Risk Assessment (PRA) is one quantitative risk assessment technique used by NASA, the nuclear industry, the petrochemical industry, and other high-consequence industries. PRA is a comprehensive, structured analysis method to identify and quantify risks in complex systems. PRA is quantitative in that it calculates both the probabilities of events with potential safety consequences and the severity of those consequences. PRA is intended not only to quantitatively evaluate the reliability of components but also to provide quantitative assessments of human and software errors. Quantitative methodologies of course have their own limitations, and their use should be considered as only one component of the risk decision-making process.
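As a rough illustration of how a quantitative assessment combines these quantities, the sketch below treats each scenario as an initiating event followed by conditional branch events, multiplies the branch probabilities to get the scenario probability, and sums probability times consequence across scenarios. All names and numbers are hypothetical placeholders, not values from the JA847C report or from any NASA analysis, and a real PRA would also carry uncertainty distributions rather than point values.

```python
from dataclasses import dataclass
from math import prod
from typing import Sequence


@dataclass
class Scenario:
    name: str
    initiating_probability: float            # e.g. per flight hour (assumed)
    conditional_probabilities: Sequence[float]  # later branch events
    consequence: float                       # severity on an arbitrary scale

    def probability(self) -> float:
        # Scenario probability is the product along the event-tree path.
        return self.initiating_probability * prod(self.conditional_probabilities)


def expected_consequence(scenarios: Sequence[Scenario]) -> float:
    # Sum of probability-weighted consequences over all scenarios.
    return sum(s.probability() * s.consequence for s in scenarios)


# Hypothetical numbers: a gearshaft fracture either ends in a contained
# in-flight shutdown or, less often, is followed by loss of feathering.
scenarios = [
    Scenario("shutdown, propeller feathers", 1e-5, [0.99], consequence=1.0),
    Scenario("shutdown, feathering lost", 1e-5, [0.01], consequence=100.0),
]

for s in scenarios:
    print(s.name, s.probability(), s.probability() * s.consequence)
print("total", expected_consequence(scenarios))
```

With these placeholder values, the rare branch contributes about as much to the total as the common one, which is why a scenario that is omitted or under-rated in severity can distort the overall picture.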

Qualitative and quantitative risk assessments are part of a broader risk decision making, risk management, and risk communication effort. Risk decision making seeks to make informed decisions among many alternatives based on safety, technical, cost, and schedule factors. The process of decision making is complex, and involves a diverse set of measures and considerations. The decision-making process becomes even more complex if the program has a high degree of schedule, cost, or technical uncertainty, as is the case in many space programs. In addition, there is always a diverse set of stakeholders and authorities with interests in the project. This decision-making process requires both objective technical input and subjective human judgment. The decision making should be formal, but managerial judgment considering multiple factors and analyses is still required. Risk assessments are an aid in the decision-making process, but should not be the only factor that enters into the final decision.

Summary

There is no doubt that system safety efforts will always be performed with a degree of uncertainty because of the human judgment involved. However, hazard risk assessments performed without an understanding of those uncertainties may provide results showing unrealistically low risks. Multiple approaches, including both qualitative and quantitative techniques, must be considered in the assessment of risk. Organizations should balance subjective judgment with objective data, and recognize that both are necessary to obtain an understanding of risk and prioritize efforts when resources are limited. An organization should have a questioning attitude toward its risk assessments, and should use the assessments as one of a number of tools to help improve its judgment and the safety of its programs and projects.

Reference: Japan Transport Safety Board, “Aircraft Serious Incident Investigation Report, Japan Air Commuter, JA847C,” Report No. AI2010-6, August 27, 2010.
