This is the second article in our series on the safety of autonomous robots. Catch up on the series introduction here.

By Louise Dennis, Michael Fisher, Sandor Veres, and Nick Lincoln

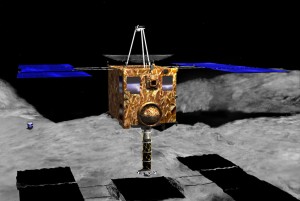

In 2003 the Japanese Aerospace Exploration Agency (JAXA) launched the Hayabusa spacecraft with a mission to study the Itokawa asteroid, including landing on the asteroid, taking samples, and returning with them to Earth. The mission faced multiple challenges, many related to the impossibility of real-time communication. For instance, the Minerva mini-lander was destroyed because the command to release it reached the spacecraft while it was at too high an altitude above the asteroid. There were also issues involved in understanding the autonomous actions taken by the spacecraft (e.g., a decision to abort one of its own landing attempts). JAXA lost all contact with the spacecraft for three months in 2006 following damage to its ion thrusters that caused it to move off course. Once rediscovered, a painstaking process ensued, sending it commands to slowly realign itself so that full communication could be restored. Despite these problems, Hayabusa successfully returned more than 1,500 grains of asteroid particles to Earth. However, the mission is illustrative of the complex problems inherent in remote exploration of our solar system.

The vast distances of space, and the communication delays they cause, create very real problems for the control of exploratory spacecraft. Even in Earth orbits, low bandwidths and the spacing of appropriate ground stations can mean a satellite is out of direct human control for significant periods of time. As a result, spacecraft need a significant degree of autonomy and the ability to react to unexpected events without the intervention of a human. An obvious concern is the accuracy of any decision-making software implemented on such systems. The construction of spacecraft and satellites is an expensive business, as is launching them. Hence, there is a natural reluctance to risk destruction of the craft because the autonomous software made a “stupid” decision that no human operator would have contemplated.

Limiting the autonomy of unmanned spacecraft clearly limits our ability to explore, and possibly exploit, the resources in our solar system. It also imposes constraints on, and reduces the flexibility for, the operation of orbital satellites. In particular it is challenging to operate such satellites in the close formations which may be needed for triangulation.

Improving AI Decision Making

Artificial Intelligence has long been concerned with the kinds of decision-making problems currently being faced by the space industry. However, the field’s success in the control of robots and other devices embedded in the real world has been limited. Early attempts at artificial intelligence based control of robotic systems foundered as scientists attempted to represent too much information at a high level about which the robot could reason using logic. This eventually led to the popularity of layered architectures in which most of the system operation was directly governed by feedback controllers and other fast reactive controls and only a small amount of information, if any, was ever made available to explicit reasoning systems.

Satellite frames at the Autonomous Spacecraft Testing Facility at the University of Southampton where this work was performed (Credits: University of Southampton).

We have implemented an agent-based approach to spacecraft control. This exists as a trial system that has been tested both in simulation and on a hardware testbed located at the University of Southampton. Our approach to the control of spacecraft follows the layered architecture approach. We assume that a spacecraft has capabilities for following paths and maintaining orbits which do not need the intervention of high level reasoning processes. Similarly we assume the availability of planning systems that can generate such paths and orbits. Our focus has been on extending the high level reasoning so that the spacecraft is capable of selecting particular paths and orbits for itself, negotiating with other spacecraft about formations and task allocation, reacting to unexpected problems by performing diagnostics on, for instance, its own internal thrusters, and reacting to emergencies (such as the detection of obstacles) by overriding other behavior to take evasive action.

We implemented this high level reasoning using the Beliefs, Desires, and Intentions (BDI) model of Agency. In a BDI program, reasoning procedures are represented in a high level fashion in terms of the beliefs the spacecraft has about its environment and its goals, which may be overall mission goals, or temporary subgoals. In our implementation, beliefs are abstractions of sensor data and represent statements such as “a thruster is broken” or “there is an obstacle in my path.” Such beliefs are not facts – it is always possible that broken or insufficiently sensitive sensors may mean that these beliefs are erroneous. The spacecraft can be interrogated after an event about the decisions it made, and it will be able to report the beliefs and goals that were present that led to the decision.

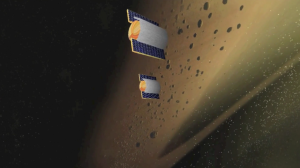

Screenshot of the autonomous spacecraft simulator set up (Credits: the Universities of Liverpool and Southampton).

The overall framework is event driven with, typically, a spacecraft’s high level reasoning represented as small plans provided by the programmer. These define the craft’s desired behavior when certain conjunctions of beliefs and goals hold.

One of the drawbacks of event-based programming is that it is difficult for a programmer to accurately predict program behavior when several events happen at once, or in close proximity to each other. It becomes important to know that evasive action will always be taken, even if other actions are required to, for instance, restart a thruster or negotiate tasks with other spacecraft. As an added difficulty, it is impossible to determine, for a spacecraft operating in a physical environment, all the possible inputs to sensors, nor to be certain about the ultimate effect of any action it takes.

Verifying Agent Decisions

We have developed a system which allows us to automatically verify the reasoning procedures implemented for our spacecraft. We avoid the problems mentioned above by working at the level of the beliefs that an agent may have. While this, therefore, cannot tell us everything about the overall operation of the system (for the beliefs are not facts), it does let us check that the event-based program will not be the component that causes mission failure. Similarly we only check that the spacecraft decides to take some particular action, not the effect the action will have. So we check that the satellite computes and then attempts to follow a path that will avoid a detected obstacle, not that no collision occurs.

Screenshot of the autonomous spacecraft simulator set up (Credits: the Universities of Liverpool and Southampton).

We use a technique known as program model-checking to exhaustively test all executions of the spacecraft’s decision-making program based on all the possible combinations of events/beliefs that may occur at any one time. We are then able to check that, no matter how many events are occurring concurrently, the spacecraft’s decisions remain in line with our safety parameters. We used the java-pathfinder model checking tool created by NASA to verify the behavior of spacecraft programs written in an event-based Beliefs-Desires-Intentions agent language which is implemented on top of Java. We have been able to verify the decisions of simulated satellites attempting to maintain a formation in low Earth orbit and spacecraft exploring an asteroid field.

The verification process revealed issues with the original code, primarily in situations where the beliefs arriving from a sensor changed rapidly and confusingly (e.g., a thruster keeps reporting it is broken, and then fixed). Such rapidly changing beliefs could be caused by loose connections or malfunctioning sensors. In these situations we found it was necessary to include failsafes in the code where, if the spacecraft decided it was receiving bad information from sensors, it would cease attempting complex maneuvers and await external instructions. This experience convinced us of the value of applying model-checking techniques to this kind of code base.

Screenshot of the autonomous spacecraft simulator set up (Credits: the Universities of Liverpool and Southampton).

The clear limitations of our verification approach – that it verifies only the decision-making program, not the processing of sensor data, nor the implementation of control systems – means that it is important that conventional testing and validation processes are pursued alongside model-checking of decision-making code. However we believe our technique provides a roadmap for the validation of comparatively sophisticated decision-making programs that may be added to a spacecraft’s software. The combination of verified code, constructed in a way that allows easy high-level understanding of decisions taken, provides a route to more ambitious missions both in Earth’s orbits and exploring the solar system.

Louise Dennis and Michael Fisher are researchers at the Centre for Autonomous Systems Technology, University of Liverpool. Sandor Veres and Nick Lincoln are researchers at the Autonomous Control Laboratory, University of Sheffield. They are supported by the EPSRC-funded project “Engineering Autonomous Space Software.” For more information visit: http://www.liv.ac.uk/CAST and http://www.robosafe.org/.

![A trajectory analysis that used a computational fluid dynamics approach to determine the likely position and velocity histories of the foam (Credits: NASA Ref [1] p61).](https://www.spacesafetymagazine.com/wp-content/uploads/2014/05/fluid-dynamics-trajectory-analysis-50x50.jpg)

Leave a Reply