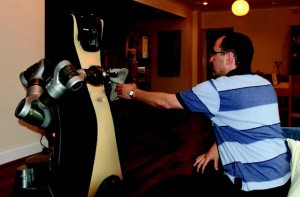

The Care-O-bot robotic assistant in the Robot House at the University of Hertfordshire, in use as part of the RoboSafe project (Credits: The University of Hertfordshire).

For years we have routinely used robots, and with great success: welding robots, paint spraying robots, washing machines, and automatic car washes, to name a few. The number of applications of robotic assistants is set to increase over the coming years with technology evolving from robots which are relatively stationary and limited in their activities to robots which move with (and among) us and assist us in our own wide-ranging activities.

The reasons why we may choose to use robotic assistants are many and varied. One example often given is the growth of aging populations in many developed countries. As standards of healthcare improve, people will live longer, but may also have longer periods of diminished ability or convalescence. Clearly there is a large economic impact of so many of us requiring regular or constant care. Therefore, robotic assistants (such as Fraunhofer IPA’s Care-O-bot®) could be used to reduce the labor required in this care, while at the same time offering independence and dignity to our elderly: it has been suggested that many people would rather ask a machine than a person for help with operations like getting dressed or getting out of bed.

One of the critical technologies driving this evolution is electro-mechanical assembly, which enables the creation of sophisticated robotic systems capable of intricate movements and precise interactions with their environment. Robots equipped with advanced electro-mechanical systems can navigate complex spaces, adapt to varying tasks, and provide personalized assistance tailored to individual needs. This capability not only enhances the efficiency of caregiving but also improves the quality of life for recipients by offering reliable and compassionate support. As robotics continues to integrate into everyday life, the potential for these technologies to revolutionize healthcare, eldercare, and beyond underscores their pivotal role in shaping a more inclusive and supportive future for all.

Robotic assistants have also been suggested for use by the military. For example, the Boston Dynamics LS3 project is aimed at creating a “robotic mule” which could carry equipment for ground-based personnel. Unlike a real mule, robots like LS3 could work around the clock, be remotely controlled or autonomous in their operations, be adapted to a wide range of environments from deserts to tundra, and be easily repaired. Crucially, an LS3 could be easily discarded or destroyed if necessary without concern for its welfare.

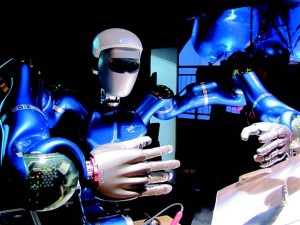

In 2011, Robonaut 2 began its mission assisting with tedious tasks aboard the International Space Station. In 2012 it was party to the first human-robot handshake in space, shared with Commander Dan Burbank (Credits: NASA).

Another potential application for robotic assistants is space exploration. One example is NASA’s Robonaut 2, currently onboard the International Space Station (ISS), which is designed to operate autonomously or by remote control. Robonaut 2 is an extensible platform that can be equipped with climbing legs for maneuvering in a microgravity environment, and could assist in routine maintenance tasks such as cleaning filters or vacuuming, thus giving human astronauts more time to dedicate to mission-specific tasks. Another example is Japan’s Kirobo robot, also onboard the ISS. Kirobo is a 34-centimeter-tall “robot astronaut” that can communicate autonomously through speech recognition and synthesis. Kirobo will provide companionship and emotional support for astronaut Koichi Wakata during ISS Expedition 39. Robotic assistants could also be used for planetary and lunar surface missions. NASA has investigated the use of human-robot teams in a mockup Martian surface environment, in which autonomous rovers would follow astronauts and assist in geological survey missions.

Autonomous Safety

Clearly there are many potential applications of robotic assistants. However, it is vital to determine the safety and trustworthiness of these robotic assistants before they can be used effectively.

To date, robotic systems have been greatly limited in their range and capacity for motion in order to increase their safety. Industrial welding robots, for example, will only work within a restricted area where people are not allowed during operations. Assuring the safety of robotic assistants will not be so easy, as robotic assistants will need to share our environments in a much more intimate way.

Robotic assistants will need to use autonomous systems to be able to cope with the unpredictability and complexity of our environments. Autonomous systems are those that decide for themselves what to do in a given situation. A robotic assistant used to assist someone during convalescence, for example, would need to identify when the person has fallen asleep and adjust its behavior so that it does not disturb them. These autonomous systems, however, present an additional challenge for safety and trustworthiness: how do we know that the decisions the autonomous robotic assistants will make are safe? In the case of the healthcare robotic assistant, how can the robot tell whether the person has fallen asleep or fallen unconscious? Will the robot respond appropriately in each case?

Kirobo, launched to the International Space Station in August 2013, is the first caretaker robot in space. The anime-like creation is intended to assist Japanese astronaut and Expedition 39 Commander Koichi Wakata to maintain psychological well-being through communication (Credits: Toyota).

It is possible to devise sensor systems that could determine when someone is asleep or unconscious by examining the position and movements of the body. It is also possible to devise actuators that would respond in the right way in each case: going into a quiet mode for the former, alerting the emergency services for the latter. However, there is an autonomous decision-making process which must also be taken into account. An autonomous robotic assistant is likely to have to analyze and interpret a large amount of information and make rational decisions based on it. The robot will monitor a wide range of sensor systems in order to determine the current state of its environment, and it will have numerous competing objectives within that environment. For this reason, determining whether the robot will always raise the alarm if the person is unconscious is an inherently complex problem. For example, the robot may decide to raise the alarm but, before it can do so, switch to a preplanned maintenance activity instead. Clearly, this is an undesirable situation. Ideally we would identify this potential bug before the robot is deployed, thus enabling the bug to be corrected and assuring the safety of the person in advance. In other words, we would like to be able to verify the autonomous system in control of the robot before deployment.

Verification of Autonomous Systems

Autonomous systems must be verified just like sensors, actuators, and other hardware. Since they are based on computer programs, we can use formal verification to increase our confidence that they are safe and reliable.

Space Justin hasn’t made it to space yet, but it’ll get there some day. Designed by German space agency DLR, Justin is built for teleoperation using a virtual reality-type interface. Its multi-directional joints help the robot more closely mimic human movements (Credits: DLR).

Formal verification uses mathematical proofs to determine that a system has a given property. Properties are typically based on system requirements, like: “The autonomous system will always request permission from the user before taking some action.” One form of formal verification is model checking, which works by exhaustively analyzing every possible execution of a program or protocol. Formal verification is useful for assessing the safety of autonomous robotic assistants, but other methods can be used for the lower-level control systems that control the robot’s actuators.
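To make the idea of model checking concrete, here is a minimal sketch in Python of an explicit-state search over a toy model of a care robot. Everything in it is an assumption made for illustration: the `State` fields, the transition labels, and the `maintenance_preempts` flag are hypothetical and do not come from any real robot or verification tool. The search visits every reachable state and reports the shortest sequence of events that leads to a state where an alarm is pending while the robot is busy with maintenance.

```python
from collections import deque
from typing import NamedTuple


class State(NamedTuple):
    person: str          # "awake", "asleep", or "unconscious"
    alarm_pending: bool  # robot has decided to raise the alarm but has not done so yet
    alarm_raised: bool   # emergency services have been alerted
    maintaining: bool    # robot is running preplanned maintenance


def successors(s, maintenance_preempts):
    """Yield (label, next_state) for every move the toy model allows."""
    if s.alarm_raised:
        return  # terminal state: nothing more to explore
    # Environment moves: the person's condition can change at any time.
    if s.person == "awake":
        yield "person falls asleep", s._replace(person="asleep")
        yield "person loses consciousness", s._replace(person="unconscious")
    elif s.person == "asleep":
        yield "person wakes", s._replace(person="awake")
    # Robot moves.
    if s.person == "unconscious" and not s.alarm_pending and not s.maintaining:
        yield "robot decides to raise alarm", s._replace(alarm_pending=True)
    if s.alarm_pending and not s.maintaining:
        yield "robot raises alarm", s._replace(alarm_pending=False, alarm_raised=True)
    # Hypothetical design flaw: maintenance may start even if an alarm is pending.
    if not s.maintaining and (maintenance_preempts or not s.alarm_pending):
        yield "robot starts maintenance", s._replace(maintaining=True)
    if s.maintaining:
        yield "robot finishes maintenance", s._replace(maintaining=False)


def find_violation(maintenance_preempts):
    """Breadth-first search of all reachable states.

    Returns the shortest list of event labels leading to a state where an
    alarm is pending during maintenance, or None if no such state is reachable.
    """
    init = State("awake", False, False, False)
    parent = {init: None}  # state -> (previous state, label of the move taken)
    queue = deque([init])
    while queue:
        s = queue.popleft()
        if s.alarm_pending and s.maintaining:
            trace, node = [], s
            while parent[node] is not None:
                node, label = parent[node]
                trace.append(label)
            return trace[::-1]
        for label, nxt in successors(s, maintenance_preempts):
            if nxt not in parent:
                parent[nxt] = (s, label)
                queue.append(nxt)
    return None


if __name__ == "__main__":
    print("flawed design:", find_violation(maintenance_preempts=True))
    print("fixed design: ", find_violation(maintenance_preempts=False))
```

In this toy model the flawed design yields a three-step counterexample, while the design in which a pending alarm blocks maintenance has no reachable violating state. Real model checkers work on far larger state spaces and richer property languages; this sketch only illustrates the exhaustive-exploration principle.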

Simulation-based testing relies on accurate physical simulations of the robot’s mechanics. The simulated robot is placed in a virtual environment where elements are randomly varied to analyze the response of the system and determine its reliability. For example, a simulation can be used to reproduce a scenario in which a robotic gripper lifts a delicate rock sample on Mars. This scenario could then be simulated many thousands of times in order to determine how often the gripper’s control system succeeds in grasping the rock properly.
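The gripper scenario can be sketched as a small Monte Carlo experiment in Python. This is a deliberately crude stand-in for a physics simulation: the parameter ranges, the fixed 3 N target force, and the two-contact friction model are all illustrative assumptions, not data from any real gripper or mission. Each trial draws a random rock and a noisy grip force, and the grasp counts as a success if the force is enough to hold the rock without crushing it.

```python
import random

MARS_GRAVITY = 3.71  # m/s^2, approximate surface gravity of Mars


def grasp_succeeds(rng):
    """Simulate one grasp attempt under randomly varied conditions."""
    # Hypothetical ranges for the rock sample's properties.
    mass_kg = rng.uniform(0.05, 0.5)
    friction = rng.uniform(0.3, 0.8)
    crush_force_n = rng.uniform(4.0, 12.0)

    # Two-finger grip: friction at both contacts must support the weight,
    # so 2 * friction * force >= mass * gravity.
    min_force_n = mass_kg * MARS_GRAVITY / (2 * friction)

    # Assumed controller: fixed 3 N target with Gaussian actuator error.
    applied_n = rng.gauss(3.0, 0.5)

    # Success means holding the rock without slipping or crushing it.
    return min_force_n <= applied_n <= crush_force_n


def estimate_success_rate(trials, seed=0):
    """Return the fraction of simulated grasps that succeed."""
    rng = random.Random(seed)  # seeded so that runs are reproducible
    successes = sum(grasp_succeeds(rng) for _ in range(trials))
    return successes / trials


if __name__ == "__main__":
    rate = estimate_success_rate(10_000)
    print(f"Estimated grasp success rate: {rate:.1%}")
```

Unlike model checking, this approach does not cover every possible execution. It gives a statistical estimate whose precision improves with the number of trials, which is why the two techniques are complementary.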

The Human Factor

It is also useful to determine whether end-users will trust autonomous robotic assistants within their target environments. Astronauts will of course be reluctant to use a robotic assistant onboard a spacecraft if they do not trust it. This reluctance could result in underutilization of an important resource (the robotic assistant itself) and could affect mission success. End-user validation involves testing robots within realistic environments and situations in order to determine whether they are fit for purpose. This technique can be used to determine whether characteristics like a robot’s appearance, gestures, and motion are sufficient to let end-users trust their robotic assistants.

Synchronized Position Hold, Engage, Reorient, Experimental Satellites, or SPHERES, may not be humanoid but that doesn’t mean they can’t be helpful. These bowling ball sized robots are being used to perform environmental monitoring and maintenance aboard the International Space Station (Credits: NASA).

Investigations are currently underway in the UK to combine formal verification, simulation-based testing, and end-user validation into a holistic approach to determining the safety and trustworthiness of autonomous robotic assistants. The £1.2 million EPSRC-funded “Trustworthy Robotic Assistants” project will use state-of-the-art facilities and techniques at the Universities of Bristol, Hertfordshire, Liverpool and the West of England to develop tools and techniques for the verification and validation of autonomous robotic assistants. The testing will include robots in the home, healthcare environments and manufacturing scenarios at first, but the lessons learned on the project will be useful to researchers and engineers wishing to develop robotic assistants for all kinds of environments, including space.

Autonomous robotic assistants are set to transform many aspects of life on Earth and beyond, as long as we can be assured that our robotic assistants are safe for use, reliable in their activities, and worthy of our trust. Tools and techniques are already being developed to provide these assurances so that society may receive the full benefit of these new autonomous robotic technologies.

Matt Webster, Clare Dixon, and Michael Fisher are researchers at the Centre for Autonomous Systems Technology, University of Liverpool. They are supported by the EPSRC-funded project “Trustworthy Robotic Assistants.” For more information visit: http://www.liv.ac.uk/CAST and http://www.robosafe.org/.

This is the introduction to a series of articles on safety of autonomous robots. It first appeared in the Winter 2014 issue of Space Safety Magazine.
