Do we really need to wait for accidents before we write a safety standard? System safety engineering answers that question with an emphatic NO. Contrary to popular belief, potential accidents in engineering can be identified in advance, classified in terms of their consequences and likelihood, and mitigated properly.

But what exactly is an accident?

Accidents and Potential Accidents

In any accident, causes lead to a top-event, from which consequences descend. Take a frontal collision of two cars. The collision is the top-event, but what kills or injures people is not the collision itself but its consequences: trauma from abrupt deceleration, flying fragments, deformation of the passenger compartment, and so on. And what can cause a collision? Typical causes include human error, driver impairment, and mechanical failure.

Learning from errors is a natural mechanism by which the human brain and its cognitive structures develop. At the societal level, safety rules and codes often represent the collective memory of (painful) experiences gained over a period of time, sometimes a very long one, as in shipbuilding or aviation. In the engineering of hazardous and high-energy systems, however, history reached a point where waiting to learn from experience was no longer a viable option.

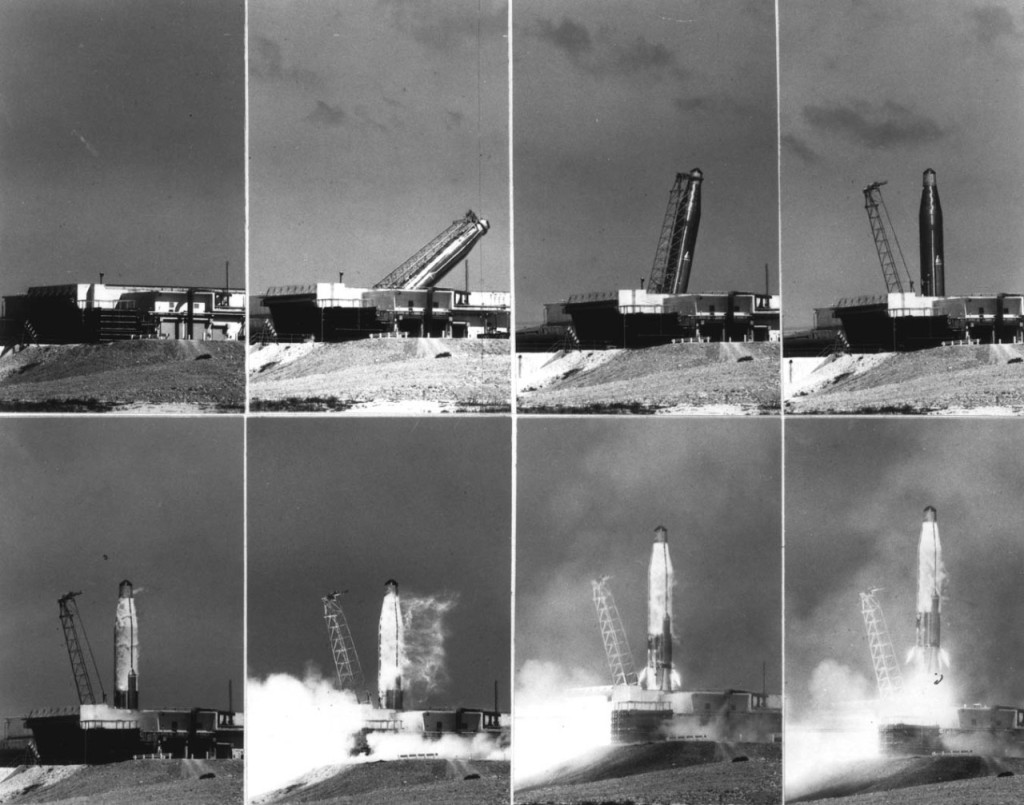

When the Atlas and Titan Intercontinental Ballistic Missiles (ICBMs) carrying nuclear warheads were initially developed in the 1950s, there was no safety program. The systems were new and there was no previous experience. Within 18 months of the missile fleet becoming operational, four blew up in their silos during operational testing. The worst accident occurred in Searcy, Arkansas, on August 9, 1965, when a fire in a Titan II silo killed 53 people.

Atlas-D ICBM launching from semi-hardened “coffin” bunker at Vandenberg AFB, California. – Credits: US Air Force.

Hazard Analysis: Prevention, Not Reaction

The incident sparked a re-evaluation of nuclear missile handling, with the realization that a country could not afford the luxury of a nuclear disaster to learn safe handling practices.

In general, the conditions leading to a potential accident are relatively easy to identify, and from this observation a technique was devised that took the name of ‘hazard analysis’. In essence, hazard analysis postulates the scenario of a credible top-event and then takes the measures necessary to lower the risk (the combination of consequence severity and probability) to an acceptable level.
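The idea can be made concrete with a minimal sketch. Everything in it is an assumption for illustration, not a method taken from the lecture: the `Hazard` class, the four-level severity and five-level likelihood scales, the `ACCEPTABLE_RISK` threshold, and the choice to combine severity and likelihood by multiplying their ordinal ranks (one common convention among several). It reuses the car collision example from earlier in the article.

```python
from dataclasses import dataclass, replace
from enum import IntEnum


class Severity(IntEnum):
    # Hypothetical four-level scale; a higher value means worse consequences.
    NEGLIGIBLE = 1
    MARGINAL = 2
    CRITICAL = 3
    CATASTROPHIC = 4


class Likelihood(IntEnum):
    # Hypothetical five-level scale; a higher value means more probable.
    IMPROBABLE = 1
    REMOTE = 2
    OCCASIONAL = 3
    PROBABLE = 4
    FREQUENT = 5


@dataclass(frozen=True)
class Hazard:
    top_event: str
    causes: tuple
    consequences: tuple
    severity: Severity
    likelihood: Likelihood

    def risk_index(self) -> int:
        # Illustrative combination: product of the two ordinal ranks.
        return int(self.severity) * int(self.likelihood)

    def is_acceptable(self, threshold: int) -> bool:
        return self.risk_index() <= threshold


# Assumed acceptance threshold; a real project would set this in its safety policy.
ACCEPTABLE_RISK = 4

collision = Hazard(
    top_event="Frontal collision of two cars",
    causes=("human error", "driver impairment", "mechanical failure"),
    consequences=(
        "trauma from abrupt deceleration",
        "flying fragments",
        "deformation of the passenger compartment",
    ),
    severity=Severity.CATASTROPHIC,
    likelihood=Likelihood.OCCASIONAL,
)

# A mitigation that acts on the causes lowers the likelihood, not the severity.
mitigated = replace(collision, likelihood=Likelihood.IMPROBABLE)

print(collision.risk_index(), collision.is_acceptable(ACCEPTABLE_RISK))
print(mitigated.risk_index(), mitigated.is_acceptable(ACCEPTABLE_RISK))
```

In this sketch the mitigation acts only on likelihood; measures that limit the consequences, such as a stronger passenger compartment, would instead lower the severity rank.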

It is through the massive use of hazard analyses that safety has been designed into the International Space Station, including newly introduced commercial vehicles like SpaceX Dragon.

The professional experience of the designers developing new suborbital vehicles seems to be essentially rooted in aviation. There, hazard analysis is not a common tool; the rule is instead to implement prescriptive requirements based on lessons learned from past accidents and close calls. Suborbital vehicle designers often maintain that no safety requirement can be levied on industry until sufficient operational experience has accumulated, which may take several years, perhaps decades.

Before further analyzing such a misplaced assertion, we should consider a few truths. System safety is an emergent property of a system that depends on hardware, software, the human in the loop, the environment, and the way operations are performed. Making the hardware reliable is not enough to ensure safety. A robust kitchen knife, for example, is very safe in the hands of an adult, potentially dangerous in those of a toddler, and a deadly weapon in the hands of a criminal. A design that is safe in one environment is not necessarily safe in another.

Hardware and software can be designed to the best of our knowledge, but our knowledge is not perfect. We can apply the most rigorous quality control during manufacturing, but perfect construction does not exist, and some defective items will be built and escape inspection. A safe system is one that, through additional margins (called safety factors), redundancies, and barriers, will “tolerate” (to a certain extent) failures and human errors so as to prevent or mitigate harmful consequences. The table below shows the top risks of suborbital vehicles. What you need next is to establish a safety policy.

What does safety policy mean? It defines how large your safety margins should be, or how many failures a piece of equipment in your system should be able to tolerate. Safety policy is a matter of choice: it is a policy to be established before executing your project. To set it, you must weigh your final safety goals (i.e. acceptable risk levels) against other project constraints such as mass and cost.
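As a rough illustration, a failure-tolerance policy can be written down as a small lookup and checked against a design. The numbers in `REQUIRED_FAILURE_TOLERANCE` and the function `meets_policy` are hypothetical, chosen only to show the shape of such a policy. The sketch assumes that a hazard guarded by n independent controls remains controlled after n - 1 of them fail.

```python
# Hypothetical policy: minimum number of independent failures a design
# must tolerate before a hazard of the given severity can occur.
REQUIRED_FAILURE_TOLERANCE = {
    "catastrophic": 2,
    "critical": 1,
    "marginal": 0,
}


def meets_policy(severity: str, independent_controls: int) -> bool:
    """Check a hazard's controls against the assumed policy.

    Tolerating k failures requires at least k + 1 independent controls,
    so that one control is still standing after k of them have failed.
    """
    required = REQUIRED_FAILURE_TOLERANCE[severity]
    return independent_controls >= required + 1


print(meets_policy("catastrophic", 3))  # three controls tolerate two failures
print(meets_policy("catastrophic", 2))  # two controls tolerate only one
```

Tightening the policy (larger numbers in the table) buys safety at the price of more redundancy, which is where the trade against mass and cost comes in.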

Kitchen knives: useful tools, dangerous hazards, or fatal weapons? Irrespective of how well designed they are, kitchen knives’ ultimate safety lies with whoever uses them. – Credits: Nick Wheeler

Do We Need Accidents?

The simple definition of safety is “the condition of being free from accidents.” Tools like hazard analysis help identify the possible causes of accidents and provide adequate mitigation.

Of course, hazard analysis carries an upfront cost that some operators may be unwilling to bear. “If you believe that safety is expensive, try an accident,” Jerome Lederer, father of aviation safety and the first head of safety at NASA, used to say. In the aftermath of the SpaceShipTwo accident, these wise words resonate more than ever.

The table below lists the top risks of suborbital vehicles. By combining the columns, all current vehicle configurations are addressed. For example, the top risks of an air-launched winged suborbital vehicle like SpaceShipTwo are collectively those of columns (B) + (C) + (D).

| Risk/Design | (A) Capsule | (B) Air Launched | (C) Rocket Propulsion | (D) Winged System |

| Carrier malfunction | X | |||

| Explosion | X | |||

| Launcher malfunction | X | |||

| Inadvertent release or firing | X | |||

| Loss of pressurization | X | X | ||

| Loss of control at reentry | X | |||

| Parachute system failure | X | |||

| Crash landing | X | |||

| Escape system failure | X | |||

| Falling fragments (catastrophic failure) | X | |||

| Leaving segregated airspace | X | X | ||

| Atmospheric pollution | X |

Note: This article is an excerpt from a lecture given by Tommaso Sgobba, IAASS Executive Director, at the 7th IAASS International Space Safety Conference, “Space Safety is NO Accident”, 20-22 October 2014, in Friedrichshafen.


The graph is completely inadequate and incorrect, raising questions about the competency of the author. In addition, the proper question here is, “Do we need suborbital human spaceflight at all?” It has all the risks of orbital flight with few of the rewards, other than passenger revenue. Other than balloon flight, suborbital flight has few scientific rewards other than the testing of equipment for orbital flight. Suborbital flight is likely to be highly polluting in an area of the atmosphere that can hold pollutants for very long periods of time. If the suborbital market grows to any significant level it will only happen because of human demand, and that demand should be preceded by studies to estimate the environmental damage.